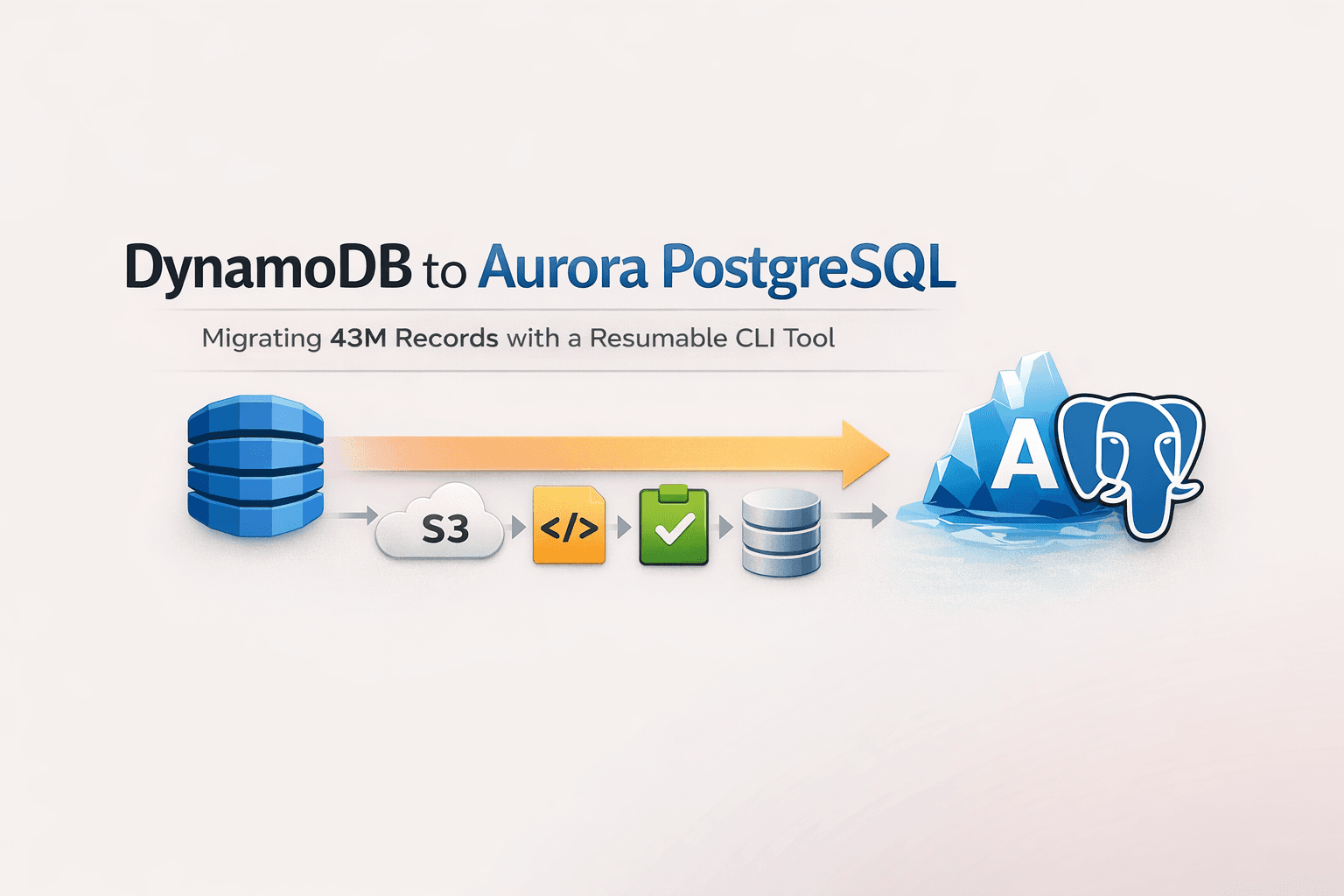

DynamoDB to Aurora PostgreSQL: Migrating 43M Records with a Resumable CLI Tool

TL;DR

Devoted husband, proud father, son, brother, and passionate software engineer, in that meaningful order.

Migrated 43 million records from DynamoDB to Aurora PostgreSQL using a resumable CLI migration tool built in Node.js (TypeScript).

Final runtime: 2h15m

Rows inserted: 12.2M

Errors: 0

Idempotent design, file-level checkpointing, and predictable restart behavior made the system reliable under failure.

No scaling miracle. Just calm engineering.

Who This Is For

This guide is for engineers who need to migrate large datasets between databases and want a system that handles failure gracefully. You'll find it useful if you've dealt with partial writes, crashed processes, or the anxiety of restarting a migration at 3am.

Context: Large-Scale DynamoDB to Aurora PostgreSQL Migration

I've had to run this kind of migration more than once.

Data moves. Architectures evolve. What was "good enough" two years ago often isn't anymore.

Eventually someone says: "We need to migrate this."

I don't decide that part. But I'm usually the one responsible for making sure it finishes, doesn't duplicate data, and doesn't explode at 3am.

Migration Context

| Property | Value |

| Source | DynamoDB |

| Target | Aurora PostgreSQL |

| Table size | 43,378,852 items |

| Data size | ~8.3 GB |

| Export format | DynamoDB Export to S3 |

The export produced 16 gzipped JSON files, each between 150–166 MB.

On paper: Read → Transform → Insert.

In practice, data migration is a reliability problem.

DynamoDB to PostgreSQL Migration Challenges

Long-running migrations fail in many ways:

Process crash

Partial writes

Network interruption

DB timeouts

Infrastructure restart

Malformed rows

The real questions become:

What was written?

What wasn't?

Can I restart safely?

Will I duplicate data?

After dealing with this uncertainty multiple times, I stopped treating migration as a scripting problem. It's a failure-handling problem.

Why AWS Lambda Failed for Long-Running Data Migration

My first attempt used AWS Lambda. It looked clean: stateless execution, easy scaling, no servers, chunk-based processing.

But migrations run long. Very long.

Problem: 30-Minute Timeout

Lambda's timeout forced me to build logic to detect near-timeout, persist checkpoint, and reinvoke itself.

Technically possible. Operationally? Messy.

Operational Issues

Logs fragmented across executions

Difficult to understand global progress

Hard to correlate runs

CloudWatch streams everywhere

Monitoring became painful

More orchestration logic than migration logic

Instead of solving data migration, I was building a mini scheduler. That's clever in the wrong direction.

So I killed it.

Final Approach: A Boring, Controlled CLI Migration Tool

I switched to a long-running CLI process in Node.js (TypeScript).

No reinvocation logic. No distributed coordination. No orchestration complexity.

Just: Stream file → Parse → Transform → Batch → Upsert → Log → Repeat.

Sometimes boring is better.

Designing an Idempotent Migration System

Before writing the tool, I defined one rule:

If I restart the migration from the beginning, the final database state must be identical.

This simplified everything. Instead of trying to eliminate duplicate work entirely, I made duplicate work harmless.

Implementation

The core of idempotency relies on PostgreSQL's ON CONFLICT clause:

sql

INSERT INTO target_table (id, data, updated_at)

VALUES ($1, $2, $3)

ON CONFLICT (id) DO UPDATE SET

data = EXCLUDED.data,

updated_at = EXCLUDED.updated_at;

Key elements:

Deterministic primary key derived from source ID

PostgreSQL

ON CONFLICT DO UPDATEfor upsert-based writesNo external state required to determine if a row was already processed

That means:

Retrying a batch is safe

Restarting the whole process is safe

Reprocessing a file is safe

Even if something runs twice, the final state is consistent. That removed most of the stress.

Checkpoint Strategy: File-Level Instead of Row-Level

I considered row-level checkpointing. It adds offset storage, metadata persistence, edge-case complexity, and more reasoning under failure.

Instead, I chose file-level checkpointing.

Each S3 file is:

Processed entirely

Marked complete only after success

If the process crashes halfway through a file, the file is reprocessed from the beginning. Because the system is idempotent, this is safe.

Less precise. Much simpler. Much more predictable.

Handling Bad Records During Bulk Inserts

Large DynamoDB exports always contain problematic records.

If a batch insert fails:

Split batch in half

Retry each half

Keep splitting

Isolate the bad record

Skip it

Everything else continues. One bad row should not stop hours of work.

Streaming Strategy for Large JSON Exports

Each .json.gz file is:

Streamed directly from S3

Decompressed on the fly

Parsed line by line

Inserted in batches of 500

Why Not Parallelize?

I intentionally used single worker, sequential file processing.

Yes, parallel execution would increase throughput. But it complicates restart logic, pressure control, and Aurora resource stability.

Predictability > maximum throughput.

Real Migration Execution Metrics

Here's one full production run:

| Metric | Value |

| Duration | 2h15m |

| Batch size | 500 |

| Files processed | 16 / 16 |

| Items scanned | 37,783,238 |

| Rows inserted | 12,205,544 |

| Skipped | 0 |

| Errors | 0 |

Why 37M Scanned but Only 12.2M Inserted?

Not every DynamoDB item mapped directly to a PostgreSQL row. The source data used a single-table design where multiple entity types (users, sessions, events) lived in one table with composite keys.

For the target relational model:

Some rows were grouped (e.g., denormalized event sequences → single aggregate row)

Some entity types weren't needed in PostgreSQL

Only target-valid entities were inserted

Everything else was ignored by design. No errors. No manual cleanup afterward.

Aurora PostgreSQL Performance During Migration

I monitored Aurora CloudWatch metrics throughout the run.

Observed Behavior

| Metric | Value |

| Commit throughput | ~3–6 commits/sec |

| Commit latency | ~200–300µs |

| Replica lag | ~15–25 seconds during peak bursts |

| CPU credit usage | Stable |

| Buffer cache hit ratio | ~100% |

Nothing exploded. No replication issues. No WAL pressure disaster.

Batch size of 500 turned out to be a good balance: high throughput with controlled database pressure.

Migration Architecture Structure

┌─────────────────────────────────────────────────────────┐

│ Core Engine │

│ ┌─────────────┐ ┌─────────────┐ ┌─────────────┐ │

│ │ Checkpoints │ │ Retries │ │Orchestration│ │

│ └─────────────┘ └─────────────┘ └─────────────┘ │

└─────────────────────────────────────────────────────────┘

│ │

▼ ▼

┌─────────────────────┐ ┌─────────────────────────┐

│ Source Dialect │ │ Target Dialect │

│ ───────────────── │ │ ───────────────────── │

│ DynamoDB S3 Export │ │ PostgreSQL Abstraction │

│ Reader │ │ Layer │

└─────────────────────┘ └─────────────────────────┘

│ │

└──────────────┬─────────────────────┘

▼

┌─────────────────────┐

│ Profile Layer │

│ ───────────────── │

│ Domain-specific │

│ transformation │

│ logic │

└─────────────────────┘

Clear separation of concerns:

Core Engine: Checkpoints, retries, orchestration logic

Source Dialect: DynamoDB S3 export reader

Target Dialect: PostgreSQL abstraction layer

Profile Layer: Domain-specific transformation logic

The engine knows nothing about business logic. The business logic knows nothing about checkpoint mechanics. That makes reuse possible.

Failure Scenarios Considered

The system was designed assuming failure is normal:

| Failure | Recovery Behavior |

| Process crash | Restart from last incomplete file |

| EC2 reboot | Same as process crash |

| Network interruption | Batch retry with exponential backoff |

| Database timeout | Connection pool refresh + retry |

| Malformed row | Isolate via binary search, skip, continue |

| Manual stop | Resume from checkpoint |

In every case, restart behavior is deterministic. That clarity mattered more than optimization.

Tradeoffs Accepted

File-level checkpoints instead of row-level

Single worker instead of parallel

CLI instead of serverless

Continue-on-error for isolated bad records

Each decision favors recovery safety over cleverness.

Migration systems are not where I try to be fancy. They are where I try to sleep well.

What I Would Improve

Failure-injection testing (e.g., Chaos Monkey style)

Better metrics export (Prometheus/Grafana integration)

Possibly safe parallelism with bounded concurrency

The core engine does not need rewriting.

Key Lessons from Large-Scale Data Migration

The hardest part of migration is not moving data. It's designing a system that behaves calmly when things go wrong.

This reinforced:

Idempotency > micro-optimization

Deterministic recovery > precision checkpointing

Simple boundaries > clever orchestration

Observability > elegance

It's not flashy. But it's something I would trust to run overnight.

And that matters more.

Source Code

If you're in a similar situation and want to adapt it:

github.com/ny-randriantsarafara/dataflux

Have questions or built something similar? I'd love to hear about your approach.